Choosing a video ad measurement solution should feel clarifying. Instead, it often introduces more doubt than confidence.

Teams come into the buying process hoping for answers: clearer performance readouts, fewer internal debates, and data they can actually stand behind. What they often get is the opposite—more tools, more dashboards, more caveats, and more time spent explaining to their CMO why numbers don’t line up.

The problem isn’t just about where ads run anymore. Fragmentation has crept into the measurement solutions themselves and into the teams that rely on them.

Instead of enabling faster learning, measurement becomes something teams have to defend—to leadership, to finance, to anyone asking for proof. The data is there, but confidence isn’t.

How Teams End Up Here

Most teams don’t set out to build a broken measurement stack. They inherit one. The system they’re working with was built for a different era—one where linear dominated, and performance could be approximated more easily.

Today’s reality is different. Linear, streaming, and digital each have their own buying processes, publishers, and audiences, but they’re still expected to roll up into one story. That’s where things start to break down, and the cracks show up at the worst possible moments:

- During in-flight optimizations.

- During performance readouts.

- When leadership asks, “What’s actually working?”

The instinctive response is predictable: add another tool, another layer, or another workaround. But you can’t fight fragmentation with more fragmentation.

And eventually, it shows up in the moments that matter most: when you’re asked to walk into a leadership meeting or CFO review and stand behind a number that doesn’t fully hold together.

Two Common Measurement Traps

Scenario #1: No Level Playing Field

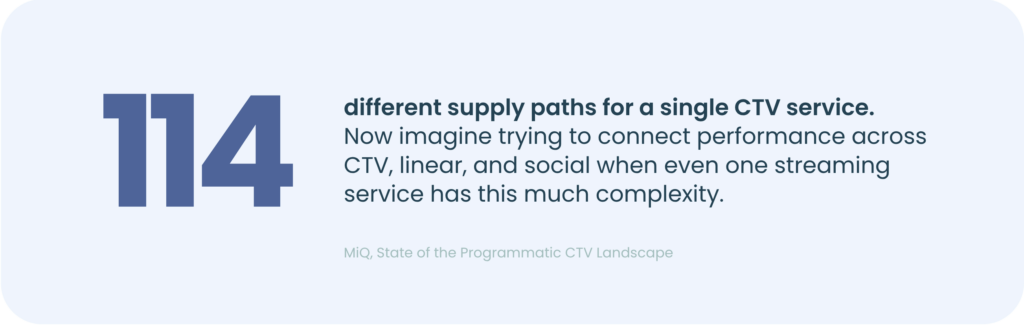

On paper, everything looks covered. You’re running ads across linear, streaming, digital, and social. Your agency sends a linear report with reach and frequency. Your streaming partners (YouTube, Netflix, Hulu) each provide their own performance readouts, and your digital and social platforms do the same.

Each platform shows you how it performed in isolation. But no one can tell you what actually matters: how much audience overlap exists across publishers, how often you’re reaching the same people, or what unique value each platform is really adding to your media plan.

So the same questions keep coming up, with no clear answers:

- Are we reaching new audiences or the same ones again?

- How many times are people actually seeing this campaign?

- Which platforms are driving unique value, and which are just duplicating it?

- What’s the true incremental value of one publisher vs. another?

Instead, every platform reports strong performance. Each one takes credit—often for the same conversions, with no clear way to validate what’s actually incremental.

What looks like efficiency in segmented reports starts to feel like waste across the full plan. Budgets stretch across more platforms, but better performance doesn’t necessarily follow. You’re left wondering how much of your investment is actually working, and how much is just overlapping.
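To make the overlap problem concrete, here is a minimal sketch of the arithmetic behind deduplicated and incremental reach. Everything in it is hypothetical: the platform names, the viewer IDs, and the assumption that a shared viewer ID exists across platforms at all, which is exactly what siloed reports lack.

```python
# Hypothetical exposure logs: platform -> set of made-up viewer IDs reached.
# Assumes one shared ID per viewer across platforms (not a given in practice).
exposures = {
    "linear": {"a", "b", "c", "d"},
    "streaming": {"c", "d", "e"},
    "social": {"d", "e", "f"},
}


def deduplicated_reach(exposures):
    """Count each viewer once, no matter how many platforms reached them."""
    return len(set().union(*exposures.values()))


def incremental_reach(exposures, platform):
    """Count viewers reached by `platform` and by no other platform in the plan."""
    others = set().union(
        *(ids for name, ids in exposures.items() if name != platform)
    )
    return len(exposures[platform] - others)


# What you get by adding up each platform's own report.
summed_reach = sum(len(ids) for ids in exposures.values())

# What the plan actually delivered once overlap is removed.
unique_reach = deduplicated_reach(exposures)

# What each platform contributed that no other platform did.
incremental = {name: incremental_reach(exposures, name) for name in exposures}

print(summed_reach, unique_reach, incremental)
```

In this made-up data, the platform reports add up to 10 viewers reached, but the plan only reached 6 unique people, and streaming reached no one the other two platforms had not already reached. None of that is visible when each report is read in isolation.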

So while your investment grows, clarity doesn’t. And for buyers, the friction builds fast. Data comes in too late to act on, creative performance gets flattened, and ROI is implied—not proven.

Scenario #2: The Multi-Vendor Juggling Act

This scenario usually starts with good intentions and a clear goal: measure the full-funnel impact of your campaigns.

Teams want to understand how their video ads drive awareness, engagement, and conversions—together, not in silos. The expectation is simple: one view that connects the entire journey. But in practice, that’s not how measurement gets built.

Instead, teams assemble a stack of point solutions. One vendor for audience measurement. Another for brand lift. Another for MMM. Another for attribution. Each one answers a different part of the equation, in isolation. Each one operates as its own black box with different methodologies, definitions, and timelines. When the signals conflict, there’s no shared foundation to resolve them.

The result isn’t clear answers; it’s competing signals. Creative performance might look strong in one report, linear reach might appear solid in another, while attribution suggests underperformance. None of the views are necessarily wrong, but they don’t reconcile. So what metric do you optimize toward?

And the cost goes beyond confusion.

More vendors don’t just create noise; they create operational drag. More budget gets tied up in tools, more time goes to reconciling conflicting numbers, and more resources are pulled away from actual optimization. Teams aren’t acting; they’re constantly aligning. And that delay comes at a real cost to the business.

Both of these scenarios lead to the same place: more data, more tools, and less confidence in what’s actually working.

Both Traps Have the Same Fix

So what does a fully unified measurement solution actually look like?

It’s not about consolidating everything into a single dashboard. It’s about eliminating the inconsistencies that make your current measurement stack impossible to trust.

It means every part of the funnel, every investment, and every ad (yours and your competitors’) is measured on the same foundation, so you can see creative impact, understand incremental reach, and tie outcomes back to the exposures that influenced them.

That unlocks the shift: from spending hours reconciling conflicting reports to actually trusting your data and using it to make decisions while campaigns are still live.

Why We Built The Ultimate Buyer’s Guide to Video Ad Measurement

We built a guide to give buyers language for what they’re already feeling, and a simple way to evaluate whether measurement is reducing friction or adding to it.

Inside, you’ll find a practical framework for what unified measurement should look like: how to connect creative, audience, and outcomes in a way that holds up under scrutiny—and actually drives decisions.

As AI solutions enter the mix, that foundation matters even more. AI can surface insights in seconds, but if the underlying data is fragmented across several sources, it just scales the problem.

The guide breaks down what most teams miss:

- Where “efficiency” falls apart without true deduplication.

- How to evaluate whether a solution can deliver full-funnel clarity.

- How to quantify the real cost of a fragmented stack.

- What to look for in AI-enabled solutions to drive clarity, not noise.

Are your tools clarifying performance, or forcing you to reconcile it?

Find out in The Ultimate Buyer’s Guide to Video Ad Measurement.